Beyond the Token: Is VL-JEPA the End of the LLM Era?

Meta’s VL-JEPA research challenges the foundations of modern AI. Explore why leading researchers believe token-based language models may not be the future of intelligence.

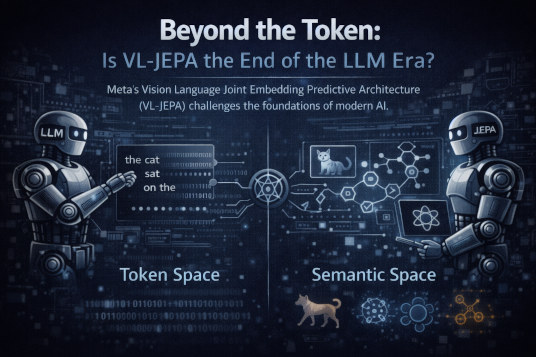

On December 11, 2025, Meta published a research paper that did not simply introduce another model into an already crowded AI ecosystem; it questioned the philosophical trajectory of the entire field. Co-authored by Yann LeCun, one of the central architects of modern deep learning, the paper introduced Vision Language Joint Embedding Predictive Architecture — VL-JEPA. While the broader public was still captivated by increasingly fluent language models such as GPT-4, Llama, and Gemini, this release quietly suggested something far more unsettling: perhaps we have mistaken eloquence for intelligence. For years, the dominant assumption in artificial intelligence has been that scaling language models — increasing parameters, expanding datasets, extending context windows — would inevitably lead us toward general intelligence. VL-JEPA does not refine that path. It questions whether the path itself is misaligned.

To understand why this matters, we must confront the architecture underlying today’s dominant systems. Large Language Models operate through auto-regression, a deceptively simple yet deeply constraining mechanism. When an LLM generates a response, it does not draft the answer in its entirety and then express it. Instead, it predicts one token at a time, with each token conditioned on the prompt and on every token generated so far. The first token is predicted from the prompt alone. The second is predicted from the prompt plus the first token. The third is predicted from the prompt plus the first two. The process repeats until a stopping condition is reached, such as an end-of-sequence token or a length limit. Nothing in this procedure requires the model to commit to a complete answer before it emits the first token; whatever plan exists is implicit in its internal activations and is never an explicit step. In that sense the model is improvising continuously. The fluency of the output creates the illusion of foresight, but the mechanism itself is sequential and reactive.
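The loop described above can be sketched in a few lines. This is a minimal illustration, not how any production model is implemented: `TOY_TABLE` and `toy_next_token_probs` are hypothetical stand-ins for a trained network, and the toy only looks at the last two tokens, whereas a real LLM attends to the whole context.

```python
from typing import Callable, Dict, List

# Hypothetical lookup table standing in for a trained network.
# Keys are the last two tokens of the context; values are next-token probabilities.
TOY_TABLE: Dict[tuple, Dict[str, float]] = {
    ("the", "cat"): {"sat": 0.7, "ran": 0.3},
    ("cat", "sat"): {"on": 0.9, "<eos>": 0.1},
    ("sat", "on"): {"the": 1.0},
    ("on", "the"): {"mat": 0.8, "cat": 0.2},
    ("the", "mat"): {"<eos>": 1.0},
}


def toy_next_token_probs(context: List[str]) -> Dict[str, float]:
    """Return a next-token distribution for the given context (toy assumption)."""
    return TOY_TABLE.get(tuple(context[-2:]), {"<eos>": 1.0})


def greedy_decode(
    prompt: List[str],
    next_probs: Callable[[List[str]], Dict[str, float]],
    max_new_tokens: int = 10,
) -> List[str]:
    """Generate tokens one at a time, always picking the most probable one."""
    context = list(prompt)
    for _ in range(max_new_tokens):
        probs = next_probs(context)        # one "forward pass" per new token
        token = max(probs, key=probs.get)  # greedy choice
        if token == "<eos>":               # stopping condition
            break
        context.append(token)              # the new token becomes part of the input
    return context[len(prompt):]


generated = greedy_decode(["the", "cat"], toy_next_token_probs)
print(generated)  # ['sat', 'on', 'the', 'mat']
```

Note that the loop never produces a draft of the whole sentence: each step sees only what has already been emitted.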

This sequential generation has profound consequences. First, it means there is no inherent planning stage. Human reasoning typically involves constructing an internal conceptual scaffold before articulating it. When writing an essay, solving a proof, or explaining a theory, we often grasp the structure of the conclusion before we begin speaking. Language becomes the vehicle of expression rather than the medium of thought itself. Auto-regressive models invert this order. They do not think and then speak; they speak and discover what they are thinking along the way. This produces remarkable mimicry, but mimicry is not the same as comprehension. It produces coherence, but coherence is not the same as understanding.

Second, the token-by-token approach is computationally expensive in a way that scaling cannot fundamentally fix. Each generated token requires its own forward pass through billions of parameters, and because each pass depends on the token produced before it, the passes for a single response cannot run in parallel. Inference cost and latency therefore grow with every additional token of output. The system cannot jump directly to a conceptual destination; it must traverse the probability landscape step by step. Scaling such architectures has required enormous computational infrastructure, specialized hardware, and escalating energy consumption. Yet even as models grow larger, their reasoning limitations remain structurally embedded in the mechanism of sequential prediction. More parameters increase fluency and pattern recognition capacity, but they do not convert statistical sequence modeling into conceptual reasoning.
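A back-of-the-envelope calculation makes the cost argument concrete. The sketch below uses the common rule of thumb that one forward pass costs roughly 2 FLOPs per parameter per token, and it ignores attention over the growing context. The 7-billion-parameter figure is a hypothetical example, not a number from the paper.

```python
def decode_flops(n_params: float, n_new_tokens: int) -> float:
    """Rough FLOPs to generate n_new_tokens, assuming ~2 FLOPs per parameter per token."""
    return 2.0 * n_params * n_new_tokens


N_PARAMS = 7e9  # hypothetical model size

short_answer = decode_flops(N_PARAMS, 50)
long_answer = decode_flops(N_PARAMS, 500)

print(f"50 tokens:  {short_answer:.1e} FLOPs")
print(f"500 tokens: {long_answer:.1e} FLOPs")
print(f"ratio:      {long_answer / short_answer:.0f}x")
```

Under these assumptions, an answer ten times longer costs ten times as much and needs ten times as many sequential steps, regardless of how simple the underlying idea is.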

The third and perhaps most critical limitation is epistemological. Language models learn correlations in text. They learn how words statistically relate to other words. They internalize vast textual representations of how humans describe the world. But descriptions are not the world itself. Learning that the sentence “objects fall due to gravity” appears frequently in text is not the same as modeling gravity as a causal phenomenon. The distinction is subtle yet essential. An LLM can describe gravity convincingly because it has absorbed linguistic patterns about gravity. But it does not simulate physical dynamics, except to the extent that such dynamics are encoded indirectly in the text it was trained on. It learns discourse about physics rather than physics itself. The result is a system that can speak with confidence about causal relationships without possessing an internal world model grounded in causal structure.

This is where LeCun’s long-standing critique becomes decisive. His argument has consistently been that intelligence does not emerge from predicting the next word. Intelligence emerges from modeling the structure of reality — from constructing internal representations of objects, relationships, cause and effect, persistence, motion, and interaction. Human cognition operates in conceptual abstractions long before language becomes involved. Infants demonstrate object permanence before they speak. They understand basic physical regularities before they master grammar. Language, in this sense, is an interface layered atop deeper cognitive structures. If artificial systems are to approximate general intelligence, they must develop analogous world models rather than remain confined to statistical token spaces.

VL-JEPA represents an attempt to move in that direction. Unlike generative models that attempt to reconstruct missing pixels in images or missing tokens in text, VL-JEPA operates by predicting semantic embeddings — abstract representations of meaning. Instead of asking, “What exact word comes next?” it asks, “What conceptual state should exist here?” Instead of reconstructing surface detail, it predicts latent structure. This shift from surface generation to embedding prediction may appear incremental from a technical standpoint, but philosophically it is transformative. It reframes AI not as an engine of probabilistic reconstruction but as a system of predictive abstraction.
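The difference between the two objectives can be illustrated with toy numbers. This is a sketch under stated assumptions: the probabilities and 3-dimensional embeddings are invented, and the squared distance is a generic stand-in, not the training loss used in the VL-JEPA paper.

```python
import math
from typing import Dict, List


def token_cross_entropy(probs: Dict[str, float], target_token: str) -> float:
    """Token-space loss: negative log-probability of the exact reference token."""
    return -math.log(probs[target_token])


def squared_distance(a: List[float], b: List[float]) -> float:
    """Embedding-space loss: squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


# Token space: the model prefers "puppy", but the reference says "dog".
predicted_probs = {"dog": 0.1, "puppy": 0.8, "car": 0.1}
token_loss = token_cross_entropy(predicted_probs, "dog")

# Embedding space: hypothetical vectors in which "dog" and "puppy" lie close together.
target_embedding = [0.9, 0.1, 0.0]     # assumed embedding of the reference
predicted_embedding = [0.8, 0.2, 0.0]  # assumed model prediction, near the target
embedding_loss = squared_distance(predicted_embedding, target_embedding)

print(f"token loss:     {token_loss:.3f}")
print(f"embedding loss: {embedding_loss:.3f}")
```

The token objective penalizes a near-synonym heavily because it is the wrong surface string, while the embedding objective treats it as almost correct because the meaning is close.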

To understand the magnitude of this shift, we must consider the difference between token space and semantic space. In token space, the fundamental units are words or sub-words. Relationships are statistical and sequential. Meaning emerges indirectly through co-occurrence patterns. The system’s internal geometry is organized around linguistic frequency distributions. In semantic space, however, units represent abstract concepts. Similar ideas cluster naturally, independent of the specific words used to describe them. The sentences “a dog is running” and “a puppy is playing” occupy nearby regions because their conceptual structures overlap, not merely because their words frequently co-occur. The system organizes knowledge according to meaning rather than syntax.
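A small example shows how the two spaces can disagree. The word vectors below are hypothetical and hand-written along three invented axes (animal, activity, machine). A real system would learn its embeddings from data, and summing word vectors is only a crude stand-in for a sentence encoder.

```python
import math
from typing import Dict, List

# Hypothetical 3-d word vectors: (animal, activity, machine). Not learned from data.
WORD_VECTORS: Dict[str, List[float]] = {
    "dog": [1.0, 0.0, 0.0],
    "puppy": [0.9, 0.1, 0.0],
    "running": [0.0, 1.0, 0.0],
    "playing": [0.1, 0.9, 0.0],
    "car": [0.0, 0.0, 1.0],
}


def token_overlap(a: str, b: str) -> float:
    """Jaccard similarity over the sets of words: a purely surface-level measure."""
    sa, sb = set(a.split()), set(b.split())
    return len(sa & sb) / len(sa | sb)


def sentence_embedding(sentence: str) -> List[float]:
    """Sum the word vectors; words without a vector (e.g. 'a', 'is') add nothing."""
    total = [0.0, 0.0, 0.0]
    for word in sentence.split():
        vec = WORD_VECTORS.get(word, [0.0, 0.0, 0.0])
        total = [t + v for t, v in zip(total, vec)]
    return total


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


s1, s2, s3 = "a dog is running", "a puppy is playing", "a car is running"
e1, e2, e3 = (sentence_embedding(s) for s in (s1, s2, s3))

print("token overlap  dog/puppy:", round(token_overlap(s1, s2), 2))
print("token overlap  dog/car:  ", round(token_overlap(s1, s3), 2))
print("cosine         dog/puppy:", round(cosine_similarity(e1, e2), 2))
print("cosine         dog/car:  ", round(cosine_similarity(e1, e3), 2))
```

By word overlap, the car sentence looks closer to the dog sentence than the puppy sentence does. In the toy semantic space the ranking flips, which is the behavior the paragraph above describes.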

This reorganization has profound implications. If a model can operate directly in semantic space, it can reason about relationships between ideas without committing to a sequence of words. It can model how a falling object relates to gravity without generating a textual explanation of gravity. It can capture the persistence of objects across time without narrating that persistence linguistically. In effect, it begins to approximate a world model — an internal simulation of how reality behaves.

The concept of a world model is central here. A world model does not merely label objects; it predicts how they interact. It encodes causality, temporal evolution, and structural constraints. It understands that if a glass is pushed off a table, it will fall and potentially shatter. It understands that occluded objects continue to exist. These are not linguistic correlations; they are causal inferences. Building such models requires learning from sensory structure — images, motion, spatial relationships — not just from textual description. VL-JEPA’s joint embedding architecture attempts to unify vision and language into a shared conceptual space, grounding abstract reasoning in perceptual structure.

Does this render large language models obsolete? Not necessarily. Instead, it repositions them. LLMs excel at linguistic translation, summarization, and conversational fluency. They are extraordinary interfaces between structured representations and human expression. But they may not be the core engines of reasoning in future systems. Instead, world models may handle planning, simulation, and conceptual reasoning, while language models act as communicative layers that translate abstract embeddings into natural language. In such an architecture, language becomes expressive rather than foundational.
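One way to picture this division of labor is a pipeline in which prediction happens on embeddings and text is produced only at the end. Everything in the sketch below is a hypothetical illustration: `toy_world_model` is a hard-coded rule and `verbalize` is a nearest-neighbor lookup over a fixed phrase list. Neither is taken from the VL-JEPA paper.

```python
from typing import Dict, List

Embedding = List[float]  # toy state: (height above floor, intactness)

# Hypothetical phrase bank the communicative layer can choose from.
PHRASES: Dict[str, Embedding] = {
    "the glass is on the table": [1.0, 1.0],
    "the glass is on the floor, intact": [0.0, 1.0],
    "the glass is on the floor, broken": [0.0, 0.0],
}


def toy_world_model(state: Embedding, pushed: bool) -> Embedding:
    """Stand-in world model: a hard-coded rule, not a learned predictor."""
    if pushed:
        return [0.0, 0.2]  # predicted next state: on the floor, mostly broken
    return state


def verbalize(state: Embedding) -> str:
    """Stand-in language layer: pick the phrase whose embedding is nearest."""
    def distance(phrase: str) -> float:
        return sum((a - b) ** 2 for a, b in zip(PHRASES[phrase], state))
    return min(PHRASES, key=distance)


current_state = [1.0, 1.0]                                    # glass resting on the table
predicted_state = toy_world_model(current_state, pushed=True)  # reasoning step, no text involved
sentence = verbalize(predicted_state)                          # language only at the interface

print(sentence)  # the glass is on the floor, broken
```

The design point is that the prediction is complete before any words are chosen; the language step could be skipped entirely if no human-readable output is needed.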

The broader implication is that we may be witnessing the end of an illusion — the illusion that scaling token prediction will inevitably converge toward human-level intelligence. The progress of the past few years has been astonishing, but it has also been narrow. It has optimized surface fluency to unprecedented levels. What VL-JEPA suggests is that intelligence may require a different axis of advancement — one focused on abstraction, causality, and predictive structure rather than sequential reconstruction.

If this trajectory continues, we may look back at the LLM era not as a mistake but as an essential stepping stone. Autoregressive models demonstrated that neural networks could internalize vast swaths of human language and generate coherent discourse. They proved that scaling works — within certain bounds. But they may ultimately be remembered as transitional architectures, bridging pattern recognition and world modeling.

The shift from token prediction to semantic prediction is not simply a technical refinement. It is a redefinition of what we mean by understanding. Predicting the next word is impressive. Predicting the next state of the world is intelligence. If VL-JEPA succeeds, the center of gravity in artificial intelligence will move away from fluent text generation and toward conceptual simulation. Language will remain important, but it will no longer be mistaken for thought itself.

And that distinction — between speaking about the world and modeling the world — may define the next era of AI.

Engineering Team

The engineering team at Originsoft Consultancy brings together decades of combined experience in software architecture, AI/ML, and cloud-native development. We are passionate about sharing knowledge and helping developers build better software.
