CI/CD Pipeline Best Practices for Modern Teams

Build reliable, fast CI/CD pipelines that your team will actually trust. From testing strategies to deployment patterns, this guide covers the architectural, cultural, and operational shifts that define modern delivery.

This is not a checklist for adding more YAML to your repository. It is a strategic blueprint for engineering leadership operating in 2026. The definition of a “good” pipeline has fundamentally changed. Automation alone is no longer differentiating. Nearly every organization has automated builds and deployments. What separates high-performing teams from the rest is whether their pipeline behaves like a passive script — or like an adaptive system.

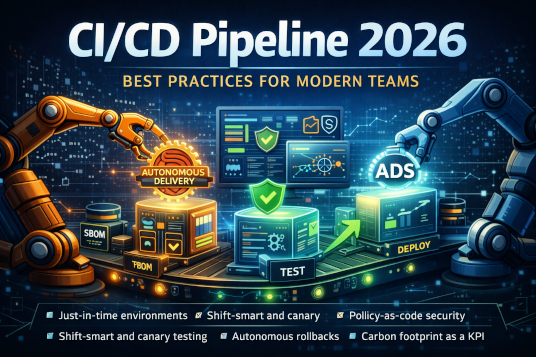

We are no longer building CI/CD pipelines. We are building Autonomous Delivery Systems (ADS): platforms that evaluate intent, assess risk, enforce governance, reason about system state, and act with minimal human intervention. In this paradigm, the pipeline is not a conveyor belt; it is an intelligent control layer for production software. It makes decisions continuously. It adapts to context. It enforces standards automatically. And most importantly, it reduces cognitive load for developers rather than adding to it.

If you are scaling a team this year, the standards outlined here are not optional enhancements. They are structural requirements. Teams that fail to modernize delivery architecture will find themselves constrained not by talent or ambition, but by friction embedded deep within their deployment process.

The 2026 Guide to Autonomous Delivery: Building CI/CD Pipelines for the Next Decade

In the early 2020s, the dominant narrative around CI/CD centered on “automating the boring stuff.” Pipelines were YAML-encoded runbooks that replaced manual steps with scripted ones. They improved consistency, reduced human error, and shortened release cycles. But they still required intervention. Engineers babysat failing builds, manually provisioned environments, negotiated release windows, and interpreted ambiguous test results. Automation existed, but autonomy did not.

By 2026, this model is obsolete. Delivery systems have evolved from reactive automation to intent-based orchestration. Developers no longer define how code should traverse each stage of the pipeline. Instead, they declare the conditions that must be satisfied before release — performance thresholds, policy constraints, compliance gates, dependency integrity. The pipeline determines the safest and most efficient path to meet those conditions.
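
To make the inversion concrete, here is a minimal Python sketch of intent-based gating. It assumes release conditions are declared as data and evaluated against signals the pipeline has already measured. The metric names (`p99_latency_ms`, `critical_vulns`, `unsigned_deps`) and thresholds are hypothetical placeholders, not any particular tool's schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Hypothetical declarative release intent: the developer states *what* must
# hold before release; the pipeline decides how and when to check it.
@dataclass(frozen=True)
class Condition:
    metric: str
    op: str          # one of "<=", ">=", "=="
    threshold: float

OPS: Dict[str, Callable[[float, float], bool]] = {
    "<=": lambda value, limit: value <= limit,
    ">=": lambda value, limit: value >= limit,
    "==": lambda value, limit: value == limit,
}

RELEASE_INTENT: List[Condition] = [
    Condition("p99_latency_ms", "<=", 250.0),  # performance threshold
    Condition("critical_vulns", "==", 0.0),    # policy constraint
    Condition("unsigned_deps", "==", 0.0),     # dependency integrity
]

def unmet_conditions(intent: List[Condition], observed: Dict[str, float]) -> List[str]:
    """Describe every condition that is not yet satisfied.

    A metric that has not been measured counts as unmet, so the pipeline
    keeps gathering evidence instead of releasing optimistically.
    """
    unmet = []
    for c in intent:
        value: Optional[float] = observed.get(c.metric)
        if value is None or not OPS[c.op](value, c.threshold):
            unmet.append(f"{c.metric} {c.op} {c.threshold} (observed: {value})")
    return unmet

if __name__ == "__main__":
    print(unmet_conditions(RELEASE_INTENT, {"p99_latency_ms": 231.0, "critical_vulns": 0.0}))
    # ['unsigned_deps == 0.0 (observed: None)']
```

Note the fail-closed choice: a condition with no measurement counts as unmet, so missing evidence blocks a release rather than waving it through.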

This shift represents a profound inversion of responsibility. The human defines objectives. The system determines execution strategy. If your team is still manually triaging flaky builds, negotiating deployment timing across Slack threads, or maintaining static staging clusters that drift from production, you are operating at a structural disadvantage. Autonomous Delivery Systems are not about speed alone; they are about systemic trust.

1. Architectural Foundation: The End of Static Infrastructure

The architectural foundation of modern CI/CD begins with a rejection of static environments. In 2026, the traditional concept of staging is considered a legacy artifact. Static environments inevitably drift. Configuration changes accumulate silently. Dependencies update asynchronously. Over time, staging becomes a shadow of production rather than a mirror of it. This divergence erodes confidence and introduces subtle deployment risks.

Modern pipelines rely on Just-in-Time (JiT) environments. For every meaningful change — typically every pull request — a full-stack environment is provisioned dynamically, validated rigorously, and destroyed immediately after use. Infrastructure is treated as disposable and reproducible. This eliminates environmental snowflakes and forces infrastructure definitions to remain declarative and version-controlled.
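
The provision, validate, destroy lifecycle maps naturally onto a context manager, which guarantees teardown even when validation fails. This is a sketch under stated assumptions: `provision_environment` and `destroy_environment` are hypothetical placeholders that only record what a real pipeline would do through its infrastructure-as-code tooling.

```python
from contextlib import contextmanager
from typing import Iterator, List

# Hypothetical record of infrastructure calls; a real pipeline would invoke
# its IaC tooling (for example vcluster or Crossplane) instead.
EVENTS: List[str] = []

def provision_environment(name: str) -> str:
    """Placeholder for applying version-controlled infrastructure definitions."""
    EVENTS.append(f"provision {name}")
    return f"https://{name}.preview.example.com"

def destroy_environment(name: str) -> None:
    """Placeholder for tearing down every resource created for `name`."""
    EVENTS.append(f"destroy {name}")

@contextmanager
def ephemeral_environment(pr_number: int) -> Iterator[str]:
    """Provision a per-PR environment and always destroy it afterwards."""
    name = f"pr-{pr_number}"
    try:
        yield provision_environment(name)
    finally:
        # Runs on success, on test failure, and on a partially failed
        # provision, so no environment outlives its pull request.
        destroy_environment(name)

if __name__ == "__main__":
    try:
        with ephemeral_environment(1234) as url:
            EVENTS.append(f"validate {url}")
            raise RuntimeError("integration tests failed")
    except RuntimeError:
        pass
    print(EVENTS)
```

Tying teardown to a `finally` block, rather than to a separate cleanup job, is what keeps a failed run from leaving an orphaned environment behind.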

The Ephemeral Cluster Pattern

The Ephemeral Cluster Pattern has become a foundational standard. Rather than sharing long-lived clusters across teams, each change is tested in isolation. Technologies such as virtual clusters (vcluster) allow Kubernetes-native environments to be instantiated rapidly: each virtual cluster runs its own control plane inside a namespace of a shared host cluster, providing isolation and resource boundaries without the overhead of provisioning a full cluster.

This approach does more than improve reliability; it changes organizational behavior. Developers gain confidence that their changes are validated in production-like conditions. Platform teams eliminate the hidden maintenance cost of shared staging systems. Most importantly, isolation reduces cross-team interference, enabling parallel velocity at scale.

Modern systems also confront the realism-versus-cost tradeoff in microservice architectures. Spinning up hundreds of services per pull request is financially impractical. Instead, pipelines incorporate Agentic Service Mocking. These intelligent mocking systems analyze real traffic patterns and generate high-fidelity behavioral replicas of dependencies — including latency characteristics, error distributions, and edge-case responses. Testing becomes realistic without becoming prohibitively expensive.
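
Production-grade agentic mocking is beyond a snippet, but the core idea of replaying a dependency's observed behavior instead of a hand-written stub can be illustrated with plain statistics. The sketch below assumes recorded traffic is available as latency and status-code pairs, and swaps in simple bootstrap resampling for the learned models the paragraph describes. `RecordedCall` and `BehavioralMock` are illustrative names.

```python
import random
from dataclasses import dataclass
from typing import List, Optional

@dataclass(frozen=True)
class RecordedCall:
    latency_ms: float
    status: int

class BehavioralMock:
    """Replays the latency/status behavior observed in recorded traffic.

    Bootstrap resampling draws whole recorded observations, so latency tails,
    error frequency, and the pairing between slow calls and failures are
    preserved without fitting a parametric model.
    """

    def __init__(self, recorded: List[RecordedCall], seed: Optional[int] = None):
        if not recorded:
            raise ValueError("need at least one recorded call")
        self._recorded = list(recorded)
        self._rng = random.Random(seed)  # seedable for reproducible test runs

    def error_rate(self) -> float:
        errors = sum(1 for call in self._recorded if call.status >= 500)
        return errors / len(self._recorded)

    def next_response(self) -> RecordedCall:
        return self._rng.choice(self._recorded)

if __name__ == "__main__":
    traffic = [RecordedCall(12.0, 200), RecordedCall(15.0, 200),
               RecordedCall(480.0, 503), RecordedCall(14.0, 200)]
    mock = BehavioralMock(traffic, seed=42)
    print(mock.error_rate())  # 0.25
    print([mock.next_response().status for _ in range(5)])
```

A mock built this way reproduces only behavior that was actually recorded; rare edge cases still need to be captured or added deliberately.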

Data Synthesis and Privacy

The regulatory landscape has permanently altered how teams handle test data. With the Global AI & Data Privacy Act of 2025, copying production data into testing environments is both a legal liability and a reputational risk. Modern pipelines integrate data synthesis as a first-class stage.

Rather than duplicating user data, pipelines analyze statistical distributions from production systems and generate synthetic datasets that preserve relational integrity and behavioral realism. Techniques such as differential privacy bound how much any single individual's record can influence the synthetic output, so individual records cannot be reliably reconstructed from it. Testing remains robust while compliance risk drops sharply. Data is treated as an engineered artifact, not a byproduct.
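
A minimal sketch of the privacy step, assuming a single categorical column whose set of possible values is known in advance. The Laplace mechanism adds noise with scale 1/ε to each category count, and synthetic rows are then drawn from the noisy histogram. Real synthesizers model joint distributions across columns to preserve relational integrity; the function names here are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, List, Sequence

def laplace(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def dp_histogram(values: Sequence[str], domain: Sequence[str],
                 epsilon: float, rng: random.Random) -> Dict[str, float]:
    """Per-category counts with Laplace noise of scale 1/epsilon.

    Adding or removing one record changes at most one count by 1, so the
    sensitivity is 1. The domain is fixed in advance: iterating only over
    categories present in the data would itself leak information.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    counts = Counter(values)
    # Clamping at zero is post-processing and does not weaken the guarantee.
    return {c: max(0.0, counts[c] + laplace(1.0 / epsilon, rng)) for c in domain}

def synthesize(noisy: Dict[str, float], n: int, rng: random.Random) -> List[str]:
    """Draw synthetic rows from the noisy histogram (also post-processing)."""
    categories = list(noisy)
    weights = [noisy[c] for c in categories]
    if sum(weights) == 0:
        weights = [1.0] * len(categories)  # no signal survived: fall back to uniform
    return rng.choices(categories, weights=weights, k=n)
```

Smaller values of ε add more noise and give stronger privacy. Because clamping and sampling only post-process the noisy counts, the synthetic rows carry the same ε guarantee.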

2. Shift-Smart Testing: The Intelligence Layer

Testing philosophy has shifted from volume to relevance. Running every test on every commit was once considered thorough. Today, it is considered inefficient. Large codebases may contain tens of thousands of tests, but most changes affect only a narrow portion of the system.

Predictive Impact Analysis (PIA)

Predictive Impact Analysis transforms testing from brute-force execution to targeted validation. A CI agent maintains a real-time dependency graph of the codebase — mapping functions, modules, services, and external integrations. When a change is introduced, the agent calculates the potential blast radius. Only tests intersecting with impacted components are executed.
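
At its core, the blast-radius calculation is a reverse-dependency traversal. This minimal sketch assumes the dependency graph (each module mapped to the modules that import it) and per-test coverage are already available; building and maintaining those maps is the hard part a real CI agent handles, and all names are illustrative.

```python
from collections import deque
from typing import Dict, Iterable, Set

def blast_radius(reverse_deps: Dict[str, Set[str]], changed: Iterable[str]) -> Set[str]:
    """Changed modules plus everything that transitively depends on them (BFS)."""
    impacted = set(changed)
    queue = deque(impacted)
    while queue:
        module = queue.popleft()
        for dependent in reverse_deps.get(module, set()):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted

def select_tests(test_coverage: Dict[str, Set[str]], impacted: Set[str]) -> Set[str]:
    """Tests that exercise at least one impacted module."""
    return {test for test, modules in test_coverage.items() if modules & impacted}

if __name__ == "__main__":
    # module -> modules that import it (hypothetical example graph)
    reverse_deps = {"db": {"orders", "users"}, "orders": {"api"}, "users": {"api"}}
    coverage = {
        "test_db": {"db"},
        "test_orders": {"orders"},
        "test_users": {"users"},
        "test_api": {"api"},
    }
    impacted = blast_radius(reverse_deps, ["orders"])
    print(sorted(select_tests(coverage, impacted)))  # ['test_api', 'test_orders']
```

A common safeguard is to fall back to the full suite whenever a change touches something the graph cannot resolve, such as build configuration or shared fixtures.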

This dramatically reduces build times while maintaining coverage integrity. Instead of running 10,000 tests indiscriminately, the pipeline may run the precise 50 that matter. Speed increases without sacrificing confidence. Testing becomes strategic rather than exhaustive.

Flake-as-a-Service

Flaky tests once drained developer morale and productivity. In modern systems, they are treated as infrastructure signals rather than individual burdens. When a test fails, a local model analyzes the stack trace, compares it against historical failure patterns, and classifies the issue. Known flakes — timeouts, transient dependency failures, network jitter — are automatically labeled and quarantined. The build proceeds while the platform team receives a structured remediation ticket.
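
The paragraph describes a local model doing this classification. The sketch below substitutes ordered regular-expression rules, a simpler technique that illustrates the same triage contract; the signatures and category names are hypothetical examples.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical known-flake signatures, checked in order.
KNOWN_FLAKES: List[Tuple[str, re.Pattern]] = [
    ("timeout", re.compile(r"TimeoutError|timed out after \d+", re.IGNORECASE)),
    ("transient_dependency", re.compile(r"HTTP 50[234]|Connection reset by peer")),
    ("network_jitter", re.compile(r"Temporary failure in name resolution|ECONNRESET")),
]

@dataclass(frozen=True)
class Triage:
    test_id: str
    category: Optional[str]  # None: no known flake signature matched
    quarantine: bool

def triage_failure(test_id: str, stack_trace: str) -> Triage:
    for category, pattern in KNOWN_FLAKES:
        if pattern.search(stack_trace):
            # Known flake: quarantine so the build can proceed; a ticket for
            # the platform team would be filed from this record.
            return Triage(test_id, category, quarantine=True)
    # Unknown signature: treat as a genuine failure and keep the build red.
    return Triage(test_id, None, quarantine=False)
```

Unmatched failures keep the build red, so only failures with a known flake signature are ever quarantined.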

Developer velocity is preserved. Infrastructure debt is tracked systematically. Flakiness is managed as a service, not endured as a nuisance.

3. Shadow Traffic and “Dark” Launches

Before any release becomes visible to users, it must first survive reality under controlled exposure. Synthetic tests and preview environments cannot fully replicate production complexity.

Traffic Mirroring

Modern pipelines integrate traffic mirroring through service mesh technologies such as Istio. A controlled portion of real production traffic is mirrored to the candidate release. Users experience no visible change. However, the system observes how the new build behaves under authentic load patterns, including unexpected edge cases and real-world data diversity.
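
In Istio, mirroring is configured on a `VirtualService` HTTP route through the `mirror` and `mirrorPercentage` fields. The sketch below builds such a manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts. The service name, namespace, and the `stable` and `candidate` subsets are assumptions, and the subsets must be defined in a matching `DestinationRule`.

```python
import json
from typing import Any, Dict

def mirrored_virtual_service(service: str, namespace: str, percent: float) -> Dict[str, Any]:
    """Route all traffic to the 'stable' subset and mirror `percent`% to 'candidate'.

    Subset names are assumptions; they must exist in a DestinationRule.
    """
    if not 0.0 <= percent <= 100.0:
        raise ValueError("percent must be between 0 and 100")
    host = f"{service}.{namespace}.svc.cluster.local"
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": service, "namespace": namespace},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [{"destination": {"host": host, "subset": "stable"}, "weight": 100}],
                "mirror": {"host": host, "subset": "candidate"},
                "mirrorPercentage": {"value": percent},
            }],
        },
    }

if __name__ == "__main__":
    print(json.dumps(mirrored_virtual_service("checkout", "shop", 5.0), indent=2))
```

Istio treats mirrored requests as fire-and-forget and discards the candidate's responses, so users only ever receive replies from the stable version.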

The Comparison Engine

An intelligent comparison engine continuously evaluates divergence between the current production version and the candidate release. Latency differentials, error rates, and output discrepancies are measured with precision. Even minor regressions — a 20ms latency increase or a 0.01% deviation in response output — can trigger automatic gating.
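
A minimal sketch of the gating arithmetic, assuming the engine receives paired observations (baseline and candidate responses to the same mirrored request) with latency, an error flag, and a digest of the response body. The default gates mirror the figures above; the names and data shapes are illustrative.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple

@dataclass(frozen=True)
class Observation:
    latency_ms: float
    is_error: bool
    body_digest: str  # digest of the normalized response body

@dataclass(frozen=True)
class Verdict:
    p99_delta_ms: float
    error_rate_delta: float
    mismatch_rate: float
    promote: bool

def percentile(values: Sequence[float], q: float) -> float:
    """Nearest-rank percentile (q in 0..100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]

def compare(pairs: Sequence[Tuple[Observation, Observation]],
            latency_gate_ms: float = 20.0,
            mismatch_gate: float = 0.0001) -> Verdict:
    """Each pair is (baseline, candidate) for the same mirrored request."""
    if not pairs:
        raise ValueError("no mirrored request pairs to compare")
    n = len(pairs)
    base = [b for b, _ in pairs]
    cand = [c for _, c in pairs]
    p99_delta = (percentile([c.latency_ms for c in cand], 99)
                 - percentile([b.latency_ms for b in base], 99))
    err_delta = (sum(c.is_error for c in cand) - sum(b.is_error for b in base)) / n
    mismatch = sum(b.body_digest != c.body_digest for b, c in pairs) / n
    # Gate on any of: a p99 latency increase of 20 ms or more, any rise in the
    # error rate, or output divergence at or above 0.01% (0.0001).
    promote = p99_delta < latency_gate_ms and err_delta <= 0 and mismatch < mismatch_gate
    return Verdict(p99_delta, err_delta, mismatch, promote)
```

In practice, response bodies must be normalized (timestamps, request IDs) before hashing, or nearly every pair will register as a mismatch.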

If anomalies persist, the system halts progression and may generate an Autonomous Refactor Request — a structured signal that remediation is required before advancement. Deployment decisions are no longer based on human intuition; they are based on quantified behavior.

4. Security: The Zero-Trust Supply Chain

Security in 2026 is not a final stage; it is an identity. Every artifact produced by a pipeline must carry verifiable provenance.

Attestation and Provenance (SBOM + PBOM)

Software Bill of Materials (SBOM) has become standard practice, cataloging every dependency included in a build. However, modern systems extend this concept with the Pipeline Bill of Materials (PBOM) — a cryptographically signed record of the build environment, tooling versions, and execution context.

Production clusters enforce signature validation at admission. Artifacts lacking trusted provenance are rejected automatically. This blocks entire classes of supply chain attacks, such as deploying a tampered artifact or one built outside the trusted pipeline.
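
The sketch below shows the shape of sign-then-verify, assuming the PBOM is a small dictionary describing the build context. It uses an HMAC from Python's standard library purely as a stand-in for a real signature scheme; production systems typically use asymmetric signing (for example Sigstore's cosign or in-toto attestations), so the cluster only needs a public key. The PBOM fields are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Any, Dict

# Stand-in only: HMAC uses one shared secret. Real provenance uses asymmetric
# signatures so the cluster holds just a public key.

def digest(artifact: bytes) -> str:
    return "sha256:" + hashlib.sha256(artifact).hexdigest()

def _sign(statement: Dict[str, Any], key: bytes) -> str:
    # Canonical JSON (sorted keys, fixed separators) so signer and verifier
    # hash identical bytes.
    payload = json.dumps(statement, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def attest(artifact: bytes, pbom: Dict[str, Any], key: bytes) -> Dict[str, Any]:
    """Bind the artifact digest to its build context (the PBOM) and sign both."""
    statement = {"subject": digest(artifact), "pbom": pbom}
    return {"statement": statement, "signature": _sign(statement, key)}

def admit(artifact: bytes, attestation: Dict[str, Any], key: bytes) -> bool:
    """Admission check: reject unless the signature and artifact digest both match."""
    statement = attestation.get("statement")
    signature = attestation.get("signature")
    if not isinstance(statement, dict) or not isinstance(signature, str):
        return False
    signature_ok = hmac.compare_digest(_sign(statement, key).encode(), signature.encode())
    return signature_ok and statement.get("subject") == digest(artifact)
```

Signing the PBOM together with the artifact digest means neither can be swapped independently: changing the recorded build context invalidates the signature just as changing the artifact does.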

Real-Time Policy-as-Code (PaC)

Governance rules are encoded directly into the pipeline. Using Policy-as-Code frameworks such as Open Policy Agent, teams define enforceable standards: no critical vulnerabilities, mandatory review for sensitive domains, strict compliance thresholds for regulated components. The pipeline enforces these policies deterministically. There are no exceptions, no negotiations, and no bypasses.
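
OPA policies are written in Rego. To keep this guide's examples in one language, the sketch below expresses the same deny-rule style in Python: each rule inspects build metadata and returns a violation message or nothing, and any violation blocks the pipeline. The input fields and the two-approval requirement are hypothetical.

```python
from typing import Any, Callable, Dict, List, Optional

Build = Dict[str, Any]
Rule = Callable[[Build], Optional[str]]  # returns a violation message or None

SENSITIVE_DOMAINS = {"payments", "auth"}  # hypothetical sensitive areas

def no_critical_vulns(build: Build) -> Optional[str]:
    if "critical_vulns" not in build:
        return "vulnerability scan results missing"  # fail closed
    if build["critical_vulns"] > 0:
        return f"{build['critical_vulns']} critical vulnerabilities"
    return None

def sensitive_domain_review(build: Build) -> Optional[str]:
    if build.get("domain") in SENSITIVE_DOMAINS and build.get("approvals", 0) < 2:
        return f"domain '{build['domain']}' requires 2 approvals"
    return None

POLICIES: List[Rule] = [no_critical_vulns, sensitive_domain_review]

def evaluate(build: Build) -> List[str]:
    """Same input, same verdict. An empty list means the build may proceed."""
    violations = []
    for rule in POLICIES:
        message = rule(build)
        if message is not None:
            violations.append(message)
    return violations
```

Missing scan results count as a violation: a policy gate that silently passes when its evidence is absent is effectively a bypass.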

Security becomes systemic rather than reactive.

5. Progressive Delivery: Decoupling Deploy from Release

Deployment and release are no longer synonymous. Code may be deployed frequently, but user exposure is controlled deliberately.

Automated Canary Analysis (ACA)

Releases begin with minimal traffic exposure — often 1%. Observability systems monitor golden signals continuously. If metrics remain within baseline tolerances, traffic increases incrementally. If anomalies emerge, progression halts automatically. Humans define acceptable thresholds; machines enforce them with precision.
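
The control loop behind automated canary analysis can be sketched as follows, assuming a hypothetical `observe` hook that shifts traffic and returns the canary's golden signals. Thresholds here are simple per-signal tolerances over the baseline; dedicated tools such as Kayenta apply statistical comparisons rather than fixed margins.

```python
from typing import Callable, Dict, List, Sequence

Metrics = Dict[str, float]

DEFAULT_STEPS: Sequence[int] = (1, 5, 25, 50, 100)  # hypothetical traffic percentages

def within_tolerance(canary: Metrics, baseline: Metrics, tolerance: Metrics) -> bool:
    """Every tracked signal may exceed the baseline by at most its tolerance."""
    return all(canary[signal] <= baseline[signal] + tolerance[signal] for signal in tolerance)

def run_canary(observe: Callable[[int], Metrics], baseline: Metrics,
               tolerance: Metrics, steps: Sequence[int] = DEFAULT_STEPS) -> List[str]:
    """Step traffic up while signals stay in tolerance; halt on the first breach.

    `observe(weight)` is a hypothetical hook that shifts `weight`% of traffic to
    the canary, waits for a soak period, and returns the canary's golden signals.
    """
    log: List[str] = []
    for weight in steps:
        if not within_tolerance(observe(weight), baseline, tolerance):
            log.append(f"halt at {weight}%: roll back")
            return log
        log.append(f"{weight}% healthy")
    log.append("promoted")
    return log
```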

Feature Flag Governance

Feature flags enable granular control but can accumulate as technical debt. Modern pipelines enforce self-extinguishing flags. When a flag reaches full rollout and remains stable for a defined period, the system automatically generates a pull request to remove the obsolete toggle. Technical debt is retired proactively, not forgotten.
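
Detecting which flags are ready to retire is a simple filter, sketched below under assumed data: each flag records its rollout percentage, when it reached 100%, and incidents since then. The 14-day stability window is a hypothetical default, and opening the actual removal pull request would be a separate step.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional

@dataclass(frozen=True)
class Flag:
    key: str
    rollout_percent: float
    fully_rolled_out_at: Optional[datetime]  # None until the flag reaches 100%
    incidents_since_rollout: int = 0

def flags_to_retire(flags: List[Flag], now: datetime,
                    stable_for: timedelta = timedelta(days=14)) -> List[str]:
    """Keys of flags at 100% with no incidents for at least `stable_for`."""
    return [
        f.key for f in flags
        if f.rollout_percent >= 100
        and f.fully_rolled_out_at is not None
        and now - f.fully_rolled_out_at >= stable_for
        and f.incidents_since_rollout == 0
    ]

if __name__ == "__main__":
    now = datetime(2026, 3, 1, tzinfo=timezone.utc)
    flags = [
        Flag("new-checkout", 100, now - timedelta(days=20)),
        Flag("beta-search", 50, None),
        Flag("dark-mode", 100, now - timedelta(days=3)),
    ]
    # Each key would be handed to a (hypothetical) job that opens a removal PR.
    print(flags_to_retire(flags, now))  # ['new-checkout']
```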

6. Operations: AIOps and the Self-Healing Loop

The ultimate goal of modern delivery architecture is operational boredom — not through neglect, but through automation.

Autonomous Rollbacks

When observability detects correlation between deployment timestamps and error spikes, the pipeline executes an immediate rollback. Engineers are notified after mitigation has occurred. The system prioritizes stability before visibility.
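
A minimal sketch of the correlation check, assuming request events are available as timestamp and error-flag pairs. It compares the error rate in equal windows before and after the deploy; the 10-minute window, 3x ratio, and 1% floor are hypothetical tuning values.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Event = Tuple[datetime, bool]  # (request timestamp, was it an error?)

def error_rate(events: List[Event], start: datetime, end: datetime) -> float:
    window = [is_error for ts, is_error in events if start <= ts < end]
    return sum(window) / len(window) if window else 0.0

def should_roll_back(events: List[Event], deployed_at: datetime,
                     window: timedelta = timedelta(minutes=10),
                     ratio: float = 3.0, floor: float = 0.01) -> bool:
    """True if the error rate jumps after the deploy relative to just before it."""
    before = error_rate(events, deployed_at - window, deployed_at)
    after = error_rate(events, deployed_at, deployed_at + window)
    # Require an absolute floor as well as a relative jump, so that noise on a
    # quiet service (for example 0.1% -> 0.3%) does not trigger a rollback.
    return after >= floor and after >= ratio * before
```

A before/after jump is correlation, not proof of causation. The bar can be this low because rolling back to a known-good version is usually cheaper than investigating first.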

Automated Post-Mortems

By the next working day, the pipeline produces a draft post-mortem. Logs, traces, potential root causes, and suggested remediation steps are assembled automatically. Engineers refine rather than reconstruct. Debugging shifts from reactive investigation to forward improvement.

7. The 2026 Metrics: Measuring What Matters

Traditional DORA metrics remain valuable but incomplete. Modern teams measure systemic friction and environmental impact alongside throughput.

Developer Joy (DevEx Score)

Pipeline latency and friction are tracked continuously. If developers spend excessive time waiting on builds or environment provisioning, the system flags platform inefficiencies automatically. Developer experience becomes quantifiable.
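
The guide does not define how a DevEx score is computed. One simple, assumption-laden starting point is to collect wait times per pipeline stage and flag any stage whose 90th-percentile wait exceeds a budget; the stage names and 10-minute budget are hypothetical.

```python
import math
from typing import Dict, List

def p90(values: List[float]) -> float:
    """Nearest-rank 90th percentile."""
    ordered = sorted(values)
    return ordered[max(1, math.ceil(90 * len(ordered) / 100)) - 1]

def friction_report(waits: Dict[str, List[float]], budget_minutes: float = 10.0) -> Dict[str, float]:
    """Return the p90 wait (minutes) of each stage that exceeds the budget."""
    report = {}
    for stage, samples in waits.items():
        if samples:
            slow = p90(samples)
            if slow > budget_minutes:
                report[stage] = slow
    return report
```

Using a high percentile rather than the mean keeps a handful of very slow runs, which developers notice most, from being averaged away.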

Carbon Footprint per Build

Sustainability is now an engineering KPI. Each build produces a carbon receipt. Non-urgent workloads are scheduled during renewable-heavy energy windows. Compute is optimized not only for cost but for environmental impact. Delivery systems acknowledge ecological responsibility.
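
A carbon receipt is straightforward arithmetic: energy consumed times the grid's carbon intensity, so a build drawing 2 kWh on a grid at 300 gCO2e/kWh emits about 600 g CO2e. The sketch below adds a scheduler for non-urgent work that picks the lowest-intensity window from an hourly forecast; how energy use is estimated and where the forecast comes from are left as assumptions.

```python
from typing import List, Tuple

def build_emissions_g(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Carbon receipt: grams CO2e = energy used (kWh) x grid intensity (gCO2e/kWh)."""
    return energy_kwh * intensity_g_per_kwh

def greenest_start(forecast: List[Tuple[int, float]], duration_hours: int) -> int:
    """Pick the start hour whose `duration_hours` window has the lowest mean intensity.

    `forecast` is a list of (hour, gCO2e/kWh) pairs in chronological order.
    """
    if duration_hours < 1 or duration_hours > len(forecast):
        raise ValueError("duration must fit inside the forecast")
    best_start, best_avg = forecast[0][0], float("inf")
    for i in range(len(forecast) - duration_hours + 1):
        window = forecast[i:i + duration_hours]
        avg = sum(g for _, g in window) / duration_hours
        if avg < best_avg:
            best_start, best_avg = window[0][0], avg
    return best_start

if __name__ == "__main__":
    print(build_emissions_g(2.0, 300.0))  # 600.0
    forecast = [(0, 400.0), (1, 350.0), (2, 120.0), (3, 100.0), (4, 380.0)]
    print(greenest_start(forecast, duration_hours=2))  # 2
```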

8. The Tooling Landscape of 2026

Modern ecosystems commonly combine agentic orchestration platforms such as LangGraph, GitOps engines like ArgoCD, ephemeral infrastructure solutions such as vcluster and Crossplane, and observability stacks built on OpenTelemetry with vector-based log analysis. Yet tools alone do not define excellence. Architecture, intent, and governance define maturity.

Summary Checklist for Engineering Leaders

* Eliminate static staging in favor of ephemeral preview environments

* Enforce safety through Policy-as-Code gates

* Mirror real traffic before every release

* Enable autonomous rollback loops

* Generate signed SBOMs and PBOMs for every artifact

In 2026, a good pipeline does not simply automate deployment. It reasons about risk, enforces policy, protects the supply chain, optimizes developer experience, and adapts to real-world behavior. The organizations that master Autonomous Delivery Systems will not merely ship faster — they will ship with confidence.

And in a world where software defines competitive advantage, confidence is everything.

Engineering Team

The engineering team at Originsoft Consultancy brings together decades of combined experience in software architecture, AI/ML, and cloud-native development. We are passionate about sharing knowledge and helping developers build better software.