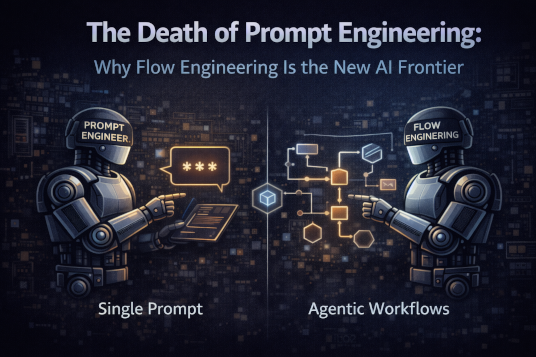

The Death of Prompt Engineering: Why Flow Engineering Is the New AI Frontier

Prompt engineering is no longer enough. Learn why flow engineering and agentic workflows now define how reliable, scalable AI systems are built.

The Death of Prompt Engineering: Why Flow Engineering Defines Production AI in 2026

For nearly two years, the technology industry has been captivated by the belief that intelligence could be unlocked primarily through linguistic precision. The rise of large language models created a powerful illusion: that mastery of phrasing was mastery of the system itself. Entire ecosystems emerged around this idea. Prompt Engineer became a legitimate job title. Companies invested in internal training programs focused not on architecture or observability, but on how to phrase instructions in ways that coaxed better results from probabilistic models. Developers exchanged prompt snippets in online forums the way earlier generations shared CSS hacks or regex tricks, convinced that subtle wording adjustments were the key to unlocking transformative performance.

And in early experimentation, this belief appeared justified. Adding “think step by step” could improve reasoning. Structuring output as JSON could increase reliability. Providing role context such as “you are an expert financial analyst” sometimes improved domain-specific outputs. These improvements were real. However, what was often overlooked was that these gains were incremental optimizations applied to fundamentally unstructured systems. Prompt engineering made models appear more capable, but it did not make them more reliable. As soon as these systems were placed inside production environments — where they interacted with APIs, executed business logic, modified records, or affected customer experiences — the fragility of prompt-centric thinking became painfully clear.

The shift that followed was not gradual. It was structural. Teams that attempted to scale prompt-heavy systems encountered inconsistent outputs, unpredictable costs, limited observability, and failure modes that were impossible to isolate. What had once been clever experimentation became an operational liability. The industry began to recognize that intelligence in production systems does not come from better phrasing; it comes from better structure.

This realization marks the transition from Prompt Engineering to Flow Engineering.

The Benchmark That Broke the Illusion

The shift became undeniable when benchmark results exposed the limits of the prompt-centric worldview. When Andrew Ng discussed results from the HumanEval benchmark, many engineers focused on the headline numbers without fully internalizing their implications.

Zero-shot (single-prompt) results:

* GPT-4: 67%
* GPT-3.5: 48%

GPT-3.5 wrapped in an iterative agentic workflow:

* up to 95%

These results were not the product of a magical new instruction template. They were achieved by restructuring the execution model around the language model. Tasks were decomposed into stages. Planning was separated from execution. Verification was introduced as an explicit step. Tool calls were integrated deliberately rather than implicitly assumed. The weaker model, embedded in an iterative workflow, outperformed the stronger model prompted zero-shot, not because it had become more intelligent, but because the system surrounding it had become more disciplined.

This result points to an architectural lesson: in many production scenarios, model capability is no longer the dominant variable. System design is. This mirrors a pattern long understood in traditional software engineering. A poorly structured codebase written by brilliant engineers will still collapse under complexity. Conversely, a well-architected system built with average components can produce exceptional reliability. Flow Engineering applies this principle to AI systems. The model becomes a component inside a structured machine rather than the machine itself.

Why Prompt Engineering Fails at Scale

Prompt engineering fails not because prompts are useless, but because they cannot shoulder the structural responsibilities required in production systems. The failure modes are systemic.

First, non-determinism becomes unacceptable. A single prompt invocation can produce slightly different outputs across runs, even when the input appears identical. In experimental settings, this variability may be tolerable. In regulated enterprise workflows, it is not. When outputs influence financial transactions, compliance reports, or automated communications, small inconsistencies compound into operational risk.

Second, prompt-based systems lack failure isolation. When planning, reasoning, tool invocation, and formatting are combined into a single generation step, it becomes impossible to identify which conceptual stage failed. Did the reasoning step hallucinate? Did the tool invocation parameters misalign? Did formatting corrupt structured output? With a monolithic prompt, these concerns are inseparable.

Third, prompt-centric systems lack meaningful observability. You cannot easily measure token usage per reasoning phase because there are no phases. You cannot optimize latency for planning independently of execution because everything occurs in a single opaque generation. Cost, performance, and reliability remain entangled.

Finally, prompt blobs resist versioned logic. You can version the entire prompt string, but you cannot independently evolve planning logic while preserving execution semantics. This makes incremental improvement fragile and regression-prone.

Prompt engineering optimizes language.

Flow engineering optimizes systems.

Flow Engineering: A Systems Perspective

Flow Engineering begins with a conceptual reframing. Instead of viewing the LLM as a monolithic reasoning entity, it treats the model as a reasoning primitive inside an execution graph. The distinction is subtle but decisive.

The naive architecture looks like this:

```text
User Input → Giant Prompt → Output
```

The structured architecture looks like this:

```text
User Input
    ↓
Planning Node
    ↓
Tool Retrieval Node
    ↓
Execution Node
    ↓
Validation Node
    ↓
Formatting Node
    ↓
Final Output
```

The difference is not merely aesthetic. Each node in the structured architecture represents a boundary of responsibility. Planning can be evaluated independently. Tool retrieval can be measured for latency and cache efficiency. Execution can be retried conditionally. Validation can enforce deterministic constraints before output is exposed to users. Formatting becomes a final translation layer rather than an entangled component of reasoning.

This transformation converts stochastic text generation into a structured, inspectable decision pipeline.
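To make stage boundaries concrete, here is a minimal Python sketch, assuming each node is a plain function that receives the previous node's output. `Pipeline`, `StageError`, and the toy stage functions are hypothetical illustrations, not any particular framework's API; the point is that a failure surfaces with the name of the node that raised it, and every intermediate result is kept for inspection.

```python
from dataclasses import dataclass, field


class StageError(Exception):
    """Wraps an exception with the name of the stage that raised it."""

    def __init__(self, stage: str, cause: Exception):
        super().__init__(f"stage '{stage}' failed: {cause}")
        self.stage = stage
        self.cause = cause


@dataclass
class Pipeline:
    stages: list                                # ordered (name, callable) pairs
    trace: dict = field(default_factory=dict)   # stage name -> that stage's output

    def run(self, value):
        for name, fn in self.stages:
            try:
                value = fn(value)
            except Exception as exc:
                # Attribute the failure to exactly one node.
                raise StageError(name, exc) from exc
            self.trace[name] = value
        return value


# Toy stages mirroring the structured architecture (hypothetical logic).
def plan(text):
    return {"task": text, "steps": ["lookup", "answer"]}


def retrieve(state):
    return {**state, "facts": {"lookup": "42"}}


def execute(state):
    return {**state, "answer": state["facts"]["lookup"]}


def validate(state):
    if not state["answer"].isdigit():
        raise ValueError("answer must be numeric")
    return state


def format_output(state):
    return f"Answer: {state['answer']}"


pipeline = Pipeline([
    ("planning", plan),
    ("tool_retrieval", retrieve),
    ("execution", execute),
    ("validation", validate),
    ("formatting", format_output),
])
print(pipeline.run("What is the answer?"))  # Answer: 42
```

If `validate` rejects the answer, `run` raises a `StageError` whose `stage` attribute is `"validation"`, so the failing node is identified directly instead of being inferred from one opaque generation.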

Reference Architecture: Production-Grade Flow

A practical reference architecture illustrates how this decomposition functions in real systems.

```text
┌────────────────────┐
│  Input Normalizer  │
└─────────┬──────────┘
          ↓
┌────────────────────┐
│   Planner Agent    │
└─────────┬──────────┘
          ↓
┌────────────────────┐
│  Execution Graph   │
│ (tools + subagents)│
└─────────┬──────────┘
          ↓
┌────────────────────┐
│ Critic / Verifier  │
└─────────┬──────────┘
          ↓
┌────────────────────┐
│  Output Composer   │
└────────────────────┘
```

The Input Normalizer ensures consistent structure before reasoning begins. The Planner Agent converts ambiguous intent into structured steps. The Execution Graph orchestrates tool calls and subagents. The Critic verifies constraints and catches logic drift. The Output Composer translates verified internal results into human-readable form.

This separation introduces accountability at every stage.
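The Input Normalizer is described only as ensuring consistent structure, so the Python sketch below fills in one plausible set of rules as an assumption: Unicode NFKC normalization, whitespace collapsing, and a hard character cap. `normalize_input`, `NormalizedInput`, and the 4,000-character default are hypothetical choices, not part of the reference architecture.

```python
import unicodedata
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalizedInput:
    text: str
    truncated: bool   # True if the cap cut the input short


def normalize_input(raw: str, max_chars: int = 4_000) -> NormalizedInput:
    """Hypothetical normalizer: canonical Unicode, collapsed whitespace,
    and a hard length cap applied before any model sees the text."""
    # NFKC folds compatibility characters, e.g. full-width letters to ASCII.
    text = unicodedata.normalize("NFKC", raw)
    # Collapse runs of spaces, tabs, and newlines into single spaces.
    text = " ".join(text.split())
    truncated = len(text) > max_chars
    return NormalizedInput(text[:max_chars], truncated)


print(normalize_input("  Quarterly\n\n  report  "))
# NormalizedInput(text='Quarterly report', truncated=False)
```

Recording `truncated` instead of cutting silently lets downstream stages, or the telemetry, see that the model received partial input.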

Implementation Example: Minimal Flow Engine (Pseudo-Code)

The architectural principles above become concrete when expressed as executable structure.

```python
class FlowEngine:
    # Sketch: planner, critic, and the helper methods (normalize, validate_plan,
    # execute_step, retry_with_feedback, format_output) are supplied elsewhere.

    def run(self, user_input):
        normalized = self.normalize(user_input)

        # Planning produces a structured plan, which is checked before anything runs.
        plan = self.planner.generate_plan(normalized)
        if not self.validate_plan(plan):
            raise ValueError("Invalid plan structure")

        # Execute the plan one step at a time.
        results = []
        for step in plan.steps:
            result = self.execute_step(step)
            results.append(result)

        # Verify before output is finalized; retry_with_feedback must cap its retries.
        verified = self.critic.verify(results)
        if not verified.passed:
            return self.retry_with_feedback(verified.feedback)

        return self.format_output(results)
```

The critical insight here is that reasoning is not conflated with execution. Planning generates a structured contract. Plan validation enforces structural correctness. Execution occurs stepwise. Verification ensures constraints are satisfied before the output is finalized. Retry logic is conditional and must be bounded, preventing runaway token consumption.

This is not prompt tuning.

This is architectural governance.
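Because `FlowEngine` leaves its helpers abstract, the following self-contained Python sketch wires toy collaborators into the same plan → execute → verify shape and makes the retry bound explicit: the critic's feedback is passed back to the planner, and the engine gives up after `max_retries` extra attempts. `BoundedFlowEngine`, `Plan`, `Verdict`, and the toy planner and critic are hypothetical names for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    steps: list


@dataclass
class Verdict:
    passed: bool
    feedback: str = ""


class BoundedFlowEngine:
    """Plan -> execute -> verify, retrying with critic feedback at most max_retries times."""

    def __init__(self, planner, executor, critic, max_retries: int = 2):
        self.planner = planner        # (task, feedback) -> Plan
        self.executor = executor      # step -> result
        self.critic = critic          # list of results -> Verdict
        self.max_retries = max_retries

    def run(self, user_input: str) -> list:
        task = " ".join(user_input.split())   # minimal normalization
        feedback = ""
        for _ in range(self.max_retries + 1):
            plan = self.planner(task, feedback)
            if not plan.steps:
                raise ValueError("Invalid plan structure: no steps")
            results = [self.executor(step) for step in plan.steps]
            verdict = self.critic(results)
            if verdict.passed:
                return results
            feedback = verdict.feedback       # critique shapes the next plan
        raise RuntimeError(f"verification failed after {self.max_retries + 1} attempts")


# Toy collaborators: the critic insists on a verification step.
def toy_planner(task, feedback):
    steps = ["fetch", "summarize"]
    if "verify" in feedback:
        steps.insert(1, "verify")
    return Plan(steps)


def toy_critic(results):
    if "verify:ok" in results:
        return Verdict(True)
    return Verdict(False, "plan must include a verify step")


engine = BoundedFlowEngine(toy_planner, lambda step: f"{step}:ok", toy_critic)
print(engine.run("  summarize   the report "))
# ['fetch:ok', 'verify:ok', 'summarize:ok']
```

Raising once the cap is reached, rather than returning an unverified result, is one design choice; a caller could instead return the last results flagged as unverified.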

Pattern 1: Reflection Loop with Hard Limits

Reflection loops formalize self-critique as a controlled mechanism rather than an open-ended conversation.

```python
def reflection_loop(generator, critic, input_data, max_iterations=3):
    # First draft from the generator.
    attempt = generator.generate(input_data)

    # Hard cap: at most max_iterations critique/revise rounds.
    for _ in range(max_iterations):
        feedback = critic.evaluate(attempt)
        if feedback.is_valid:
            return attempt
        attempt = generator.revise(attempt, feedback)

    # Cap reached: this last revision was never evaluated, so callers
    # should treat it as unverified (or run one final check).
    return attempt
```

The crucial design element is the iteration cap. Without it, cost escalates and termination becomes uncertain. Reflection must be structured and bounded. When implemented correctly, it can reduce hallucination rates and improve logical consistency while preserving cost discipline.
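One subtlety in `reflection_loop` is that, when the cap is hit, it returns a revision the critic never saw. The Python sketch below is a variant, not the author's version, that performs one final check and reports whether the result was accepted and how many critic calls were spent. The plain functions `generate`, `evaluate`, and `revise`, and the 20-character length rule, are hypothetical stand-ins for model calls.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    is_valid: bool
    note: str = ""


def bounded_reflection(generate, evaluate, revise, task, max_iterations=3):
    """Return (draft, accepted, critic_calls); the critic runs at most max_iterations + 1 times."""
    draft = generate(task)
    for iteration in range(max_iterations):
        feedback = evaluate(draft)
        if feedback.is_valid:
            return draft, True, iteration + 1
        draft = revise(draft, feedback)
    # Cap reached: check the final revision once so the caller knows its status.
    return draft, evaluate(draft).is_valid, max_iterations + 1


# Toy stand-ins: the "critic" enforces a 20-character limit and each
# "revision" drops the last word.
def generate(task):
    return task


def evaluate(draft):
    if len(draft) <= 20:
        return Feedback(True)
    return Feedback(False, "too long")


def revise(draft, feedback):
    return draft.rsplit(" ", 1)[0]


print(bounded_reflection(generate, evaluate, revise,
                         "flow engineering makes pipelines observable"))
# ('flow engineering', True, 4)
```

Returning the acceptance flag alongside the draft keeps the cost bound and the quality signal separate, so a caller can decide whether an unaccepted draft is still usable.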

Pattern 2: Explicit Planning Before Execution

Explicit planning transforms reasoning from implicit prose into structured data.

```python
def planning_prompt(user_input):
    # Doubled braces {{ }} render as literal braces inside an f-string.
    return f"""
Decompose the following task into structured steps.
Return JSON format:
{{
  "steps": [
    {{"type": "tool", "name": "...", "arguments": {{}}}},
    {{"type": "reasoning", "description": "..."}}
  ]
}}
Task: {user_input}
"""
```

Validation enforces schema compliance:

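```python
def validate_plan(plan):
    # Return a bool instead of asserting: asserts are skipped under `python -O`,
    # and the flow engine branches on this result.
    steps = plan.get("steps") if isinstance(plan, dict) else None
    if not isinstance(steps, list):
        return False
    return all(isinstance(step, dict) and "type" in step for step in steps)
```

This converts reasoning into an enforceable contract. Plans can be logged, audited, tested, and versioned independently of execution logic.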
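In practice the planner returns raw text, so the contract also has to be enforced at the parsing boundary. This Python sketch, using only the standard-library `json` module, parses the planner's output and rejects anything that breaks the step schema shown in `planning_prompt`. The `parse_plan` name, and the rule that `tool` steps need `name` plus a dict of `arguments` while `reasoning` steps need `description`, are assumptions read off that prompt's example.

```python
import json

# Required fields per step type, inferred from the prompt's example schema.
REQUIRED_FIELDS = {"tool": {"name", "arguments"}, "reasoning": {"description"}}


def parse_plan(raw: str):
    """Parse the planner's raw text into a plan dict, or return None if it
    breaks the contract (bad JSON, unknown step type, missing fields)."""
    try:
        plan = json.loads(raw)
    except json.JSONDecodeError:
        return None
    steps = plan.get("steps") if isinstance(plan, dict) else None
    if not isinstance(steps, list) or not steps:
        return None
    for step in steps:
        if not isinstance(step, dict):
            return None
        required = REQUIRED_FIELDS.get(step.get("type"))
        if required is None or not required.issubset(step):
            return None
        if step["type"] == "tool" and not isinstance(step["arguments"], dict):
            return None
    return plan


raw = ('{"steps": [{"type": "tool", "name": "search", "arguments": {"q": "Q3 revenue"}},'
       ' {"type": "reasoning", "description": "compare results"}]}')
print(parse_plan(raw)["steps"][0]["name"])                   # search
print(parse_plan('{"steps": [{"type": "reasoning"}]}'))      # None
```

A `None` result is a natural trigger for a bounded re-plan, rather than letting a malformed plan reach execution.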

Pattern 3: Tool-Grounded Reasoning

Tool grounding replaces speculation with verification.

```python
if step.type == "tool":
    tool = tool_registry.get(step.name)
    if tool is None:
        # Fail loudly rather than letting the model guess a result.
        raise KeyError(f"Unknown tool: {step.name}")
    result = tool.run(**step.arguments)
```

By forcing the system to retrieve authoritative data rather than hallucinate plausible answers, reliability can increase substantially. Mature systems measure tool utilization rates, latency impact, and hallucination reduction as first-class metrics.
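A slightly fuller Python sketch of the registry lookup adds an argument check before execution. `ToolRegistry`, `Step`, and the `word_count` tool are hypothetical; `inspect.signature(...).bind(...)` is the standard-library way to test whether keyword arguments fit a callable without calling it.

```python
import inspect
from dataclasses import dataclass, field


@dataclass
class Step:
    type: str
    name: str = ""
    arguments: dict = field(default_factory=dict)


class ToolRegistry:
    """Maps tool names to plain Python callables (hypothetical, not a library API)."""

    def __init__(self):
        self._tools = {}

    def register(self, name, fn):
        self._tools[name] = fn

    def run_step(self, step: Step):
        tool = self._tools.get(step.name)
        if tool is None:
            raise KeyError(f"unknown tool: {step.name}")
        # Reject planner-supplied arguments that do not match the tool's signature.
        try:
            inspect.signature(tool).bind(**step.arguments)
        except TypeError as exc:
            raise ValueError(f"bad arguments for {step.name}: {exc}") from exc
        return tool(**step.arguments)


def word_count(text: str) -> int:
    return len(text.split())


registry = ToolRegistry()
registry.register("word_count", word_count)
print(registry.run_step(Step("tool", "word_count", {"text": "flows beat prompts"})))  # 3
```

Checking arguments before the call means a planner mistake surfaces as a clear `ValueError` attributable to the tool step, instead of an error from deep inside the tool.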

Cost Engineering: The Overlooked Variable

Cost control is not an afterthought in production AI. It is an architectural constraint.

| Approach | Avg Tokens | Error Rate | Retry Cost |
|---|---|---|---|
| Single Prompt | 3,500 | 18% | High |
| Structured Flow | 1,200 per stage | 5% | Controlled |

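Although total token usage per request may end up similar (a flow with three stages at 1,200 tokens each uses roughly as much as a single 3,500-token prompt), structured flows isolate retries to failed stages rather than re-executing entire prompts. Over time, this can substantially reduce cumulative spend and cost variance.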
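The retry argument can be checked with back-of-the-envelope arithmetic on the table's own figures. This Python sketch assumes a three-stage flow (3 × 1,200 tokens per request), exactly one retry per failed request, and that a flow retry re-runs only one 1,200-token stage; these are illustrative assumptions, not measurements.

```python
def expected_tokens(base_tokens: float, error_rate: float, retry_tokens: float) -> float:
    """Expected tokens per request when each failure triggers exactly one retry."""
    return base_tokens + error_rate * retry_tokens


# Figures from the table; the stage count and single-retry policy are assumptions.
STAGES = 3
single_prompt = expected_tokens(3_500, 0.18, retry_tokens=3_500)            # rerun everything
structured_flow = expected_tokens(STAGES * 1_200, 0.05, retry_tokens=1_200)  # rerun one stage

print(round(single_prompt), round(structured_flow))  # 4130 3660
```

Under these assumptions the base cost is similar (3,500 vs. 3,600 tokens), and the gap comes almost entirely from retry overhead: about 630 expected retry tokens per request for the single prompt versus about 60 for the flow.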

Flow Engineering is reliability engineering.

It is also cost engineering.

Observability: Treat AI Like Distributed Systems

Structured logs per stage transform an AI pipeline from an opaque artifact into an observable system:

```json
{
  "stage": "planner",
  "tokens_used": 420,
  "latency_ms": 890,
  "status": "success"
}
```

With stage-level telemetry, teams can:

* Detect bottlenecks

* Compare performance across versions to catch regressions

* Enforce cost budgets

* Diagnose failure patterns

Monolithic prompts cannot provide this granularity.
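A record in the shape of the JSON log above can be produced by a small wrapper around each stage. In this Python sketch, `StageTracer` is hypothetical, and it assumes each stage function returns `(output, tokens_used)`; real token counts would come from whatever usage data the model client reports. The wrapper also enforces a per-request token budget.

```python
import json
import time


class StageTracer:
    """Hypothetical helper: one JSON record per stage, plus a token budget."""

    def __init__(self, token_budget: int):
        self.token_budget = token_budget
        self.tokens_spent = 0
        self.records = []

    def run(self, stage: str, fn, *args):
        # Assumption: each stage returns (output, tokens_used).
        start = time.perf_counter()
        status, output, tokens = "success", None, 0
        try:
            output, tokens = fn(*args)
        except Exception:
            status = "error"
            raise
        finally:
            # Runs on success and failure, so every stage leaves a record.
            record = {
                "stage": stage,
                "tokens_used": tokens,
                "latency_ms": round((time.perf_counter() - start) * 1000),
                "status": status,
            }
            self.records.append(record)
            print(json.dumps(record))
        self.tokens_spent += tokens
        if self.tokens_spent > self.token_budget:
            raise RuntimeError(f"token budget exceeded after stage '{stage}'")
        return output


tracer = StageTracer(token_budget=1_000)
steps = tracer.run("planner", lambda task: (["search", "summarize"], 420), "compare vendors")
```

Because the record is written in `finally`, failed stages are logged with `"status": "error"`, which is what makes failure patterns diagnosable per stage.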

Multi-Agent Specialization: Controlled Autonomy

A single generalist agent must carry every responsibility within one context. Specialized agents narrow each role and reduce domain drift.

* Planner → decomposes

* Researcher → retrieves

* Executor → performs

* Critic → validates

* Formatter → presents

Each operates with scoped permissions, reinforcing the principle of least privilege. Reliability scales through specialization, not generalization.
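A minimal Python sketch of scoped permissions, assuming each role is granted an explicit allow-list of tool names. `AgentRole`, `ROLES`, `call_tool`, and the tool names are hypothetical. Anything not on a role's list is refused before it runs, which is the least-privilege property described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    name: str
    allowed_tools: frozenset


# Hypothetical allow-lists mirroring the roles above.
ROLES = {
    "planner": AgentRole("planner", frozenset()),
    "researcher": AgentRole("researcher", frozenset({"web_search", "read_docs"})),
    "executor": AgentRole("executor", frozenset({"update_record"})),
    "critic": AgentRole("critic", frozenset({"read_docs"})),
    "formatter": AgentRole("formatter", frozenset()),
}


def call_tool(role_name: str, tool: str, tools: dict, **kwargs):
    """Run a tool on behalf of an agent, refusing anything outside its scope."""
    role = ROLES[role_name]
    if tool not in role.allowed_tools:
        raise PermissionError(f"{role.name} may not call {tool}")
    return tools[tool](**kwargs)


tools = {
    "read_docs": lambda doc_id: f"contents of {doc_id}",
    "update_record": lambda record_id, value: {"id": record_id, "value": value},
}
print(call_tool("critic", "read_docs", tools, doc_id="spec-7"))  # contents of spec-7
# call_tool("critic", "update_record", tools, record_id=1, value="x") raises PermissionError
```

Keeping the allow-lists in data rather than in prompts means a critic that is talked into writing records still cannot do so.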

Conclusion: Architecture Is the New Intelligence

The prompt era accelerated experimentation, but it cannot support long-term production demands. Flow Engineering transforms language models from probabilistic text generators into structured decision engines.

Prompts remain important.

But flows are foundational.

Architecture is now the primary differentiator in applied AI systems. Teams that design execution graphs, enforce stage isolation, instrument observability, and control cost will outpace those still optimizing adjectives.

The model is an engine.

The flow is the machine.

Engineering Team

The engineering team at Originsoft Consultancy brings together decades of combined experience in software architecture, AI/ML, and cloud-native development. We are passionate about sharing knowledge and helping developers build better software.
